Describing the full technical workflow, including every tool, script, and edge case, would easily fill an article on its own. Instead, this section focuses on the approach and production flow used in this project, outlining how generative AI is integrated into a reliable, event-ready pipeline.

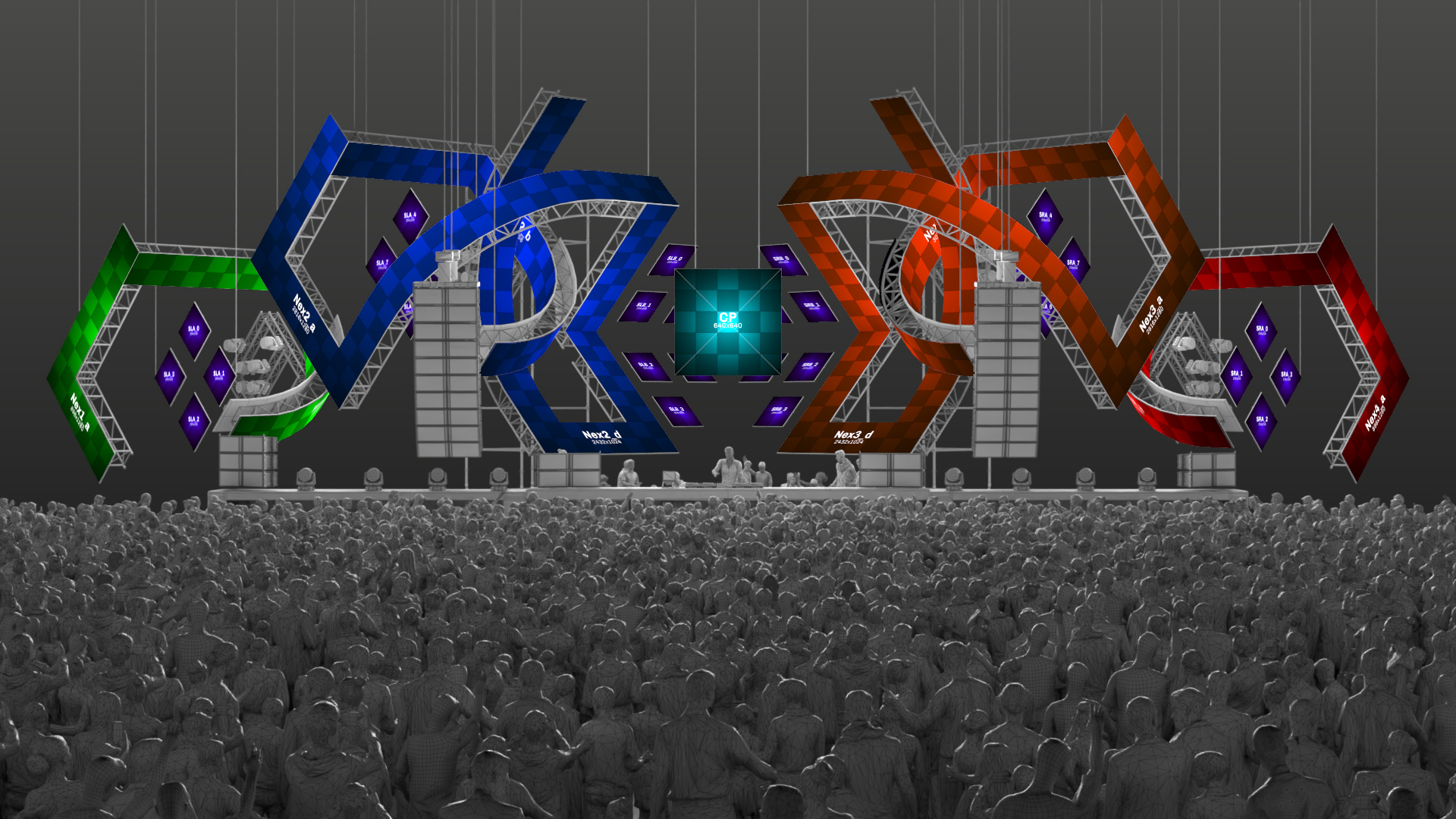

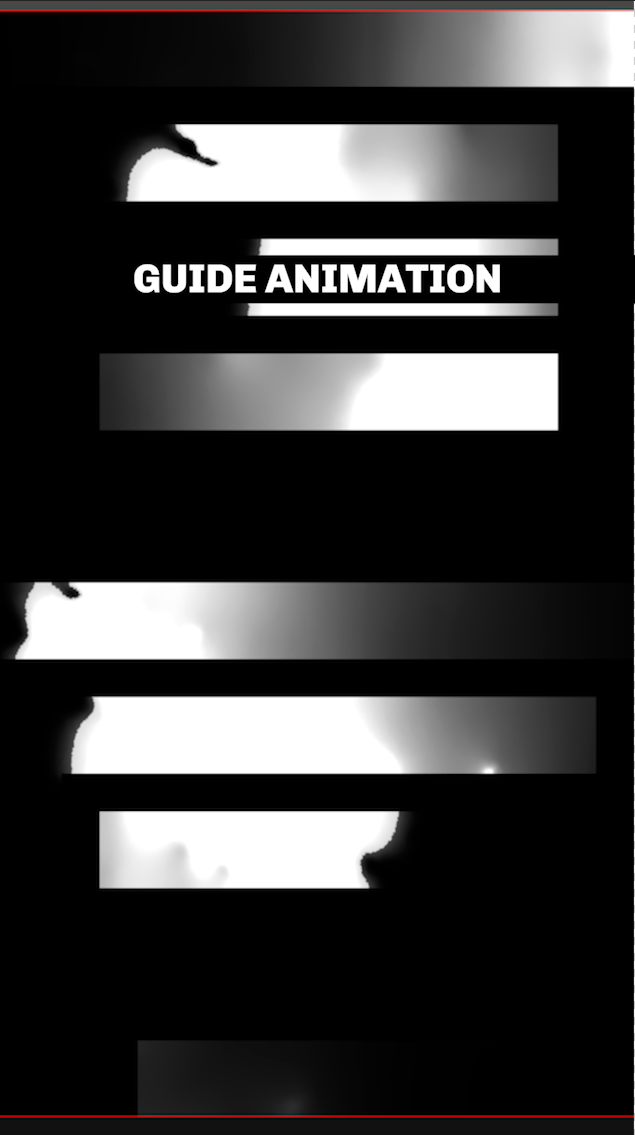

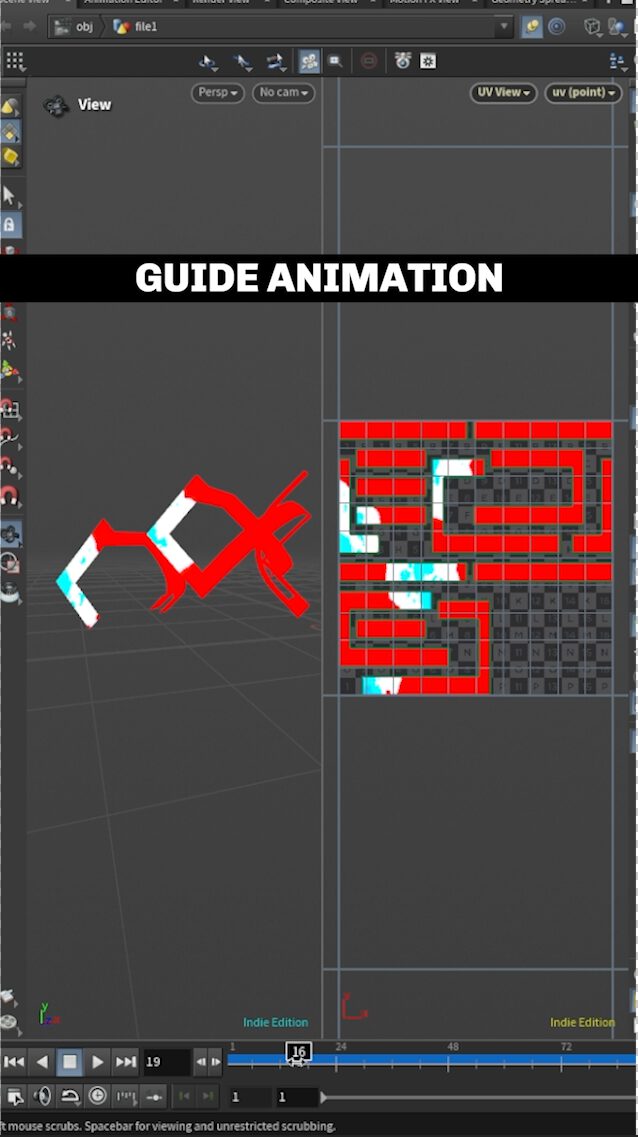

The process starts with defining the pixelmap and matte animation. All timing, structure, and motion are created first on the full canvas, covering the entire show or separate effects. This step establishes the spatial logic of the setup early on, ensuring that motion, rhythm, and transitions already work at the stage level before any AI generation is introduced.

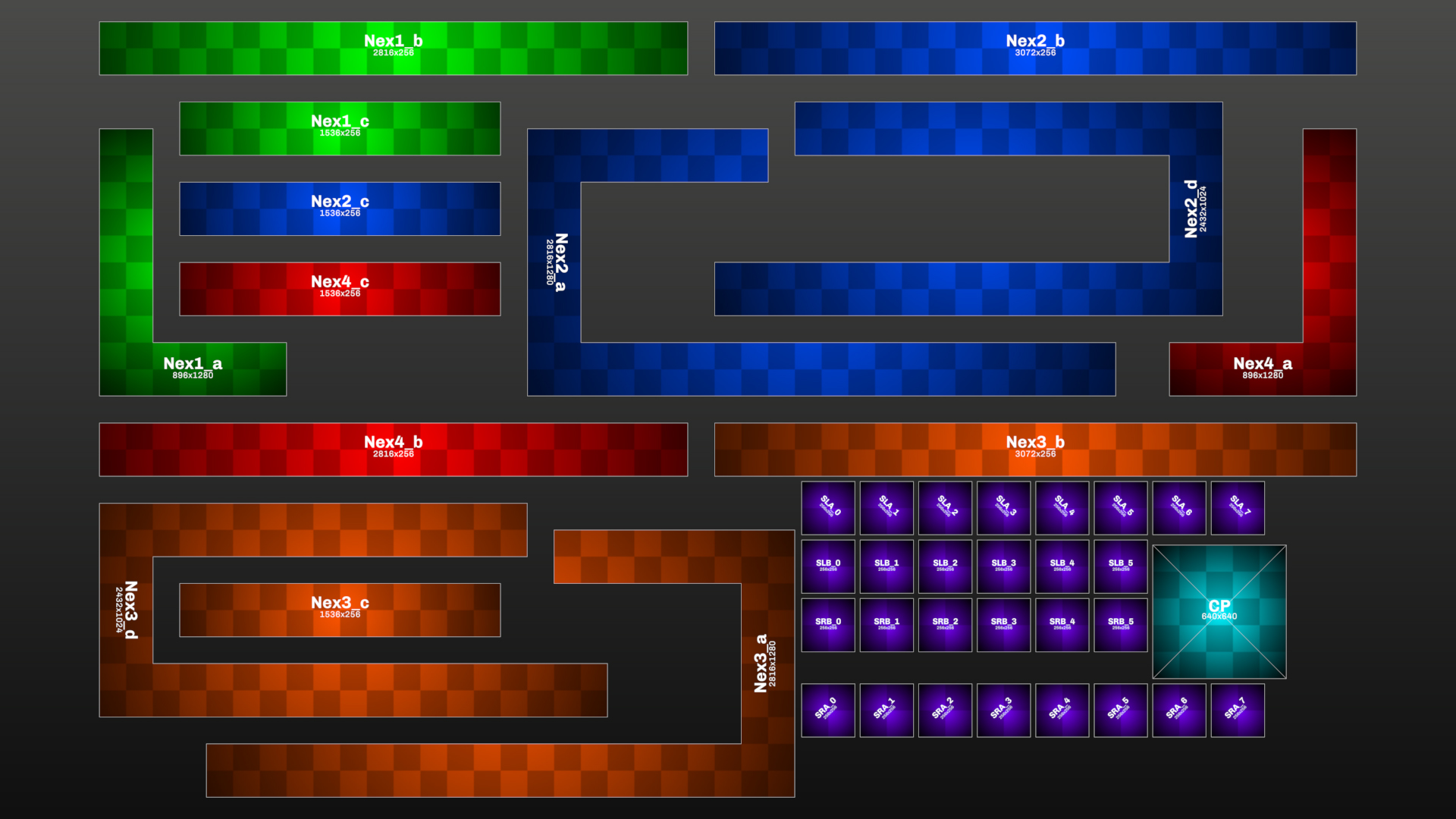

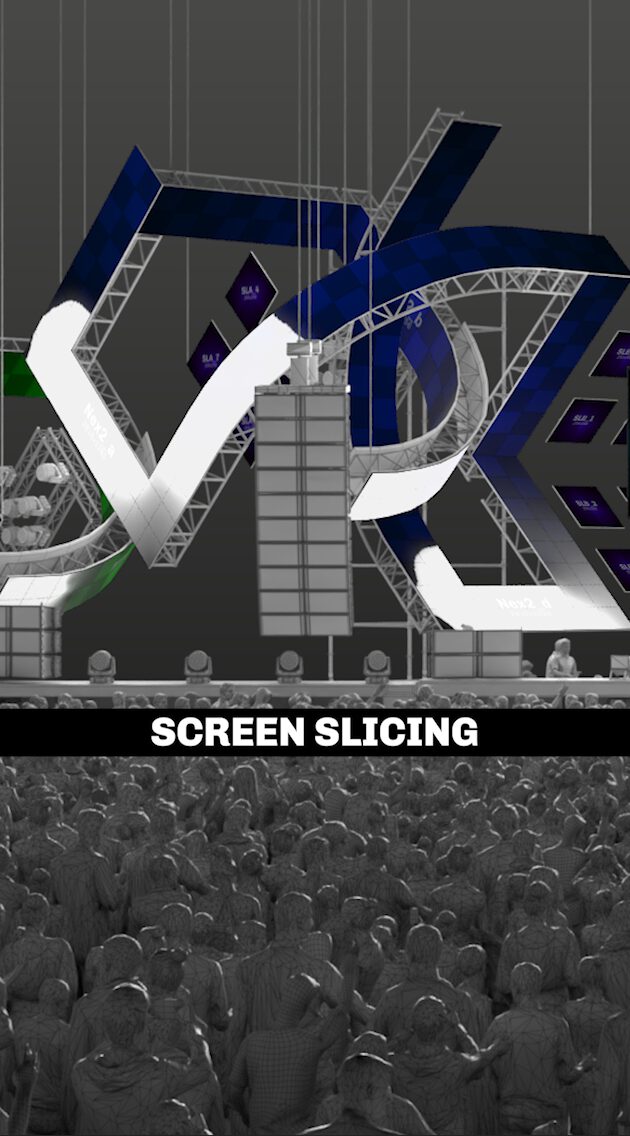

Once the base animation is locked, the pixelmap is broken down into individual slices. These slices are carefully cropped and framed so they fit into resolutions that AI models can handle efficiently while still allowing for high-quality output. Each slice represents a controlled window of the larger canvas rather than an isolated visual element.
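To make the idea of a "controlled window" concrete, here is a minimal sketch of how slice rectangles could be planned. It assumes a uniform grid with a fixed maximum slice size and a small overlap. In a real show the slices would follow the physical surfaces and be framed by hand, so the `Slice` type, the `plan_slices` function, and the example resolutions are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slice:
    """A rectangular window into the full pixelmap (hypothetical type)."""
    name: str
    x: int       # left edge on the full canvas, in pixels
    y: int       # top edge on the full canvas, in pixels
    width: int
    height: int


def plan_slices(canvas_w, canvas_h, max_w, max_h, overlap=0):
    """Cover the canvas with windows no larger than max_w x max_h.

    Neighbouring windows share at least `overlap` pixels so that seams
    can be blended later. The last window on each axis is pushed flush
    against the canvas edge, so it may overlap more than the others.
    """
    if not 0 <= overlap < min(max_w, max_h):
        raise ValueError("overlap must be >= 0 and smaller than the slice size")

    def starts(total, window):
        if total <= window:
            return [0]
        step = window - overlap
        positions = list(range(0, total - window, step))
        positions.append(total - window)  # final window ends at the edge
        return positions

    slice_w = min(max_w, canvas_w)
    slice_h = min(max_h, canvas_h)
    slices = []
    for row, y in enumerate(starts(canvas_h, max_h)):
        for col, x in enumerate(starts(canvas_w, max_w)):
            slices.append(Slice(f"slice_r{row}_c{col}", x, y, slice_w, slice_h))
    return slices


# Example: an assumed 3840x1080 pixelmap cut into windows of at most 1024x1024.
for s in plan_slices(3840, 1080, 1024, 1024, overlap=64):
    print(s.name, s.x, s.y, s.width, s.height)
```

Because every slice stores its offset on the full canvas, the position information needed for reassembly travels with the slice from the start.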

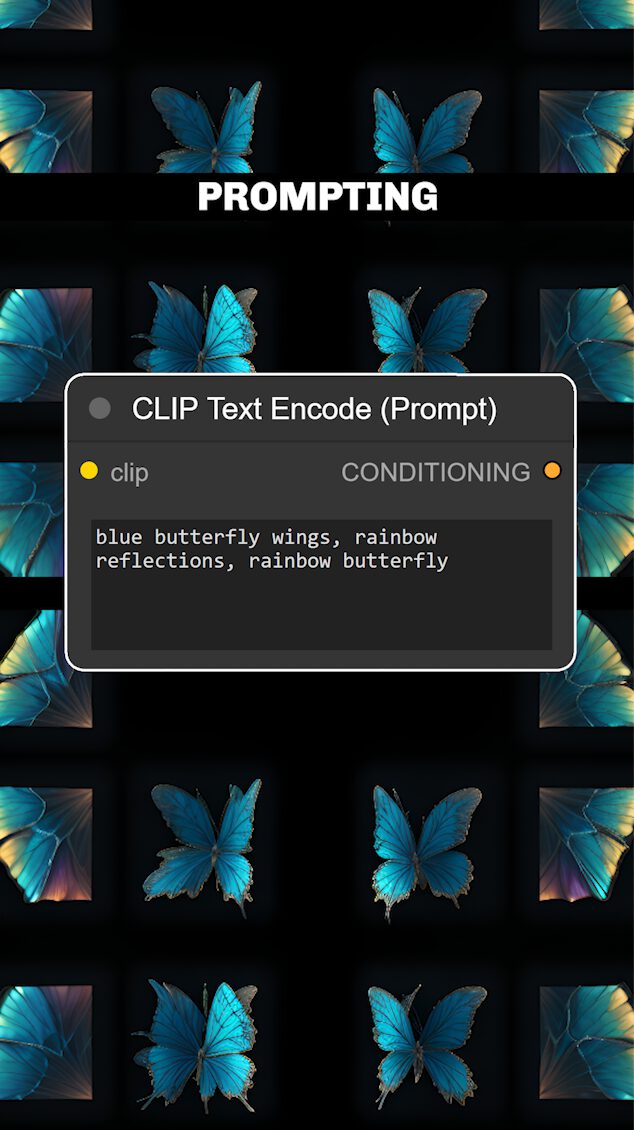

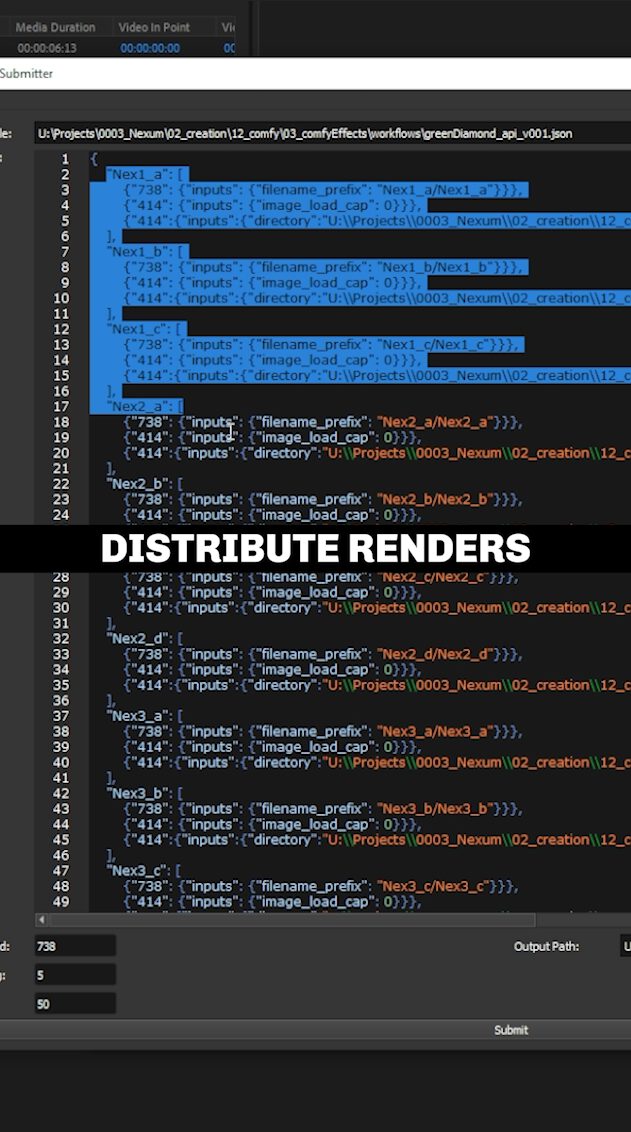

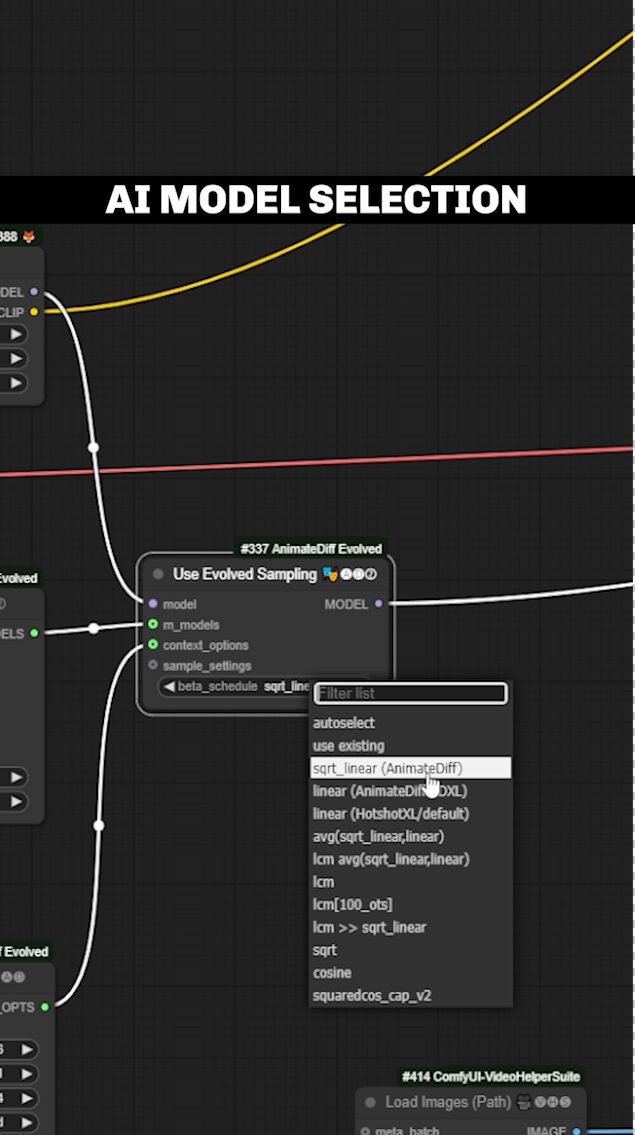

From there, the pipeline moves into ComfyUI, where style and generative identity are applied. Instead of generating visuals blindly, the AI operates within predefined spatial and temporal constraints. To make this scalable, I developed custom tools that integrate with the Deadline render manager, allowing the sliced renders to be distributed across multiple machines. This significantly reduces render times and makes high-resolution, multi-surface generation feasible in production environments.
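The distribution step can be pictured as turning "slices × frames" into a flat list of independent work units that a render manager hands to whichever machine is free. The sketch below only builds that list. It does not call Deadline or ComfyUI, and it is not the custom tooling described above. The `build_tasks` function, the task dictionary layout, and the idea of chunking by frame range are assumptions for illustration.

```python
def build_tasks(slice_names, first_frame, last_frame, chunk_size):
    """Split every slice into frame-range chunks that can run independently.

    Returns a list of dicts, one per work unit. Frame numbers are inclusive.
    Setting chunk_size to the full frame count yields one task per slice,
    which may be preferable when a generation needs the whole clip at once.
    """
    if chunk_size < 1:
        raise ValueError("chunk_size must be at least 1")
    if last_frame < first_frame:
        raise ValueError("last_frame must not be before first_frame")

    tasks = []
    for name in slice_names:
        for start in range(first_frame, last_frame + 1, chunk_size):
            end = min(start + chunk_size - 1, last_frame)
            tasks.append({"slice": name, "start": start, "end": end})
    return tasks


# Example: two hypothetical slices, frames 1-250, 100 frames per task.
for task in build_tasks(["slice_r0_c0", "slice_r0_c1"], 1, 250, 100):
    print(task)
```

The more work units there are, the more evenly the load spreads across machines, at the cost of more per-task startup overhead such as model loading.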

After all slices are rendered, the pipeline returns to Fusion, where the entire mapping is reconstructed. Each generated segment is reassembled into its original position on the pixelmap, restoring the full spatial context. The final result can be used as a layered visual effect or as a complete, timecoded show, ready to sync with lighting and media servers.
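In this project the reconstruction happens in Fusion, but the underlying placement logic is simple enough to sketch in a few lines. The example below is a toy version under loud assumptions: frames are plain nested lists of pixel values rather than real image buffers, the `composite` function is hypothetical, and overlapping regions are simply overwritten by the later slice instead of being feathered or blended.

```python
def composite(canvas_w, canvas_h, placed_slices, background=0):
    """Paste each slice back at its original offset on the full canvas.

    placed_slices is an iterable of (x, y, pixels) tuples, where pixels is
    a list of rows and (x, y) is the slice's top-left corner on the canvas.
    Pixels that fall outside the canvas are ignored.
    """
    canvas = [[background] * canvas_w for _ in range(canvas_h)]
    for x, y, pixels in placed_slices:
        for row_idx, row in enumerate(pixels):
            cy = y + row_idx
            if not 0 <= cy < canvas_h:
                continue
            for col_idx, value in enumerate(row):
                cx = x + col_idx
                if 0 <= cx < canvas_w:
                    canvas[cy][cx] = value
    return canvas


# Example: two 2x2 slices placed side by side on a 4x2 canvas.
left = [[1, 1], [1, 1]]
right = [[2, 2], [2, 2]]
frame = composite(4, 2, [(0, 0, left), (2, 0, right)])
for row in frame:
    print(row)
```

The key point is that the offsets come from the slicing step, not from the generated content, so the AI output cannot drift away from its place on the stage.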

This hybrid pipeline balances the creative potential of generative AI with the predictability and control required for live events, making it possible to use AI not just as an experiment, but as a dependable production tool.